Project goals

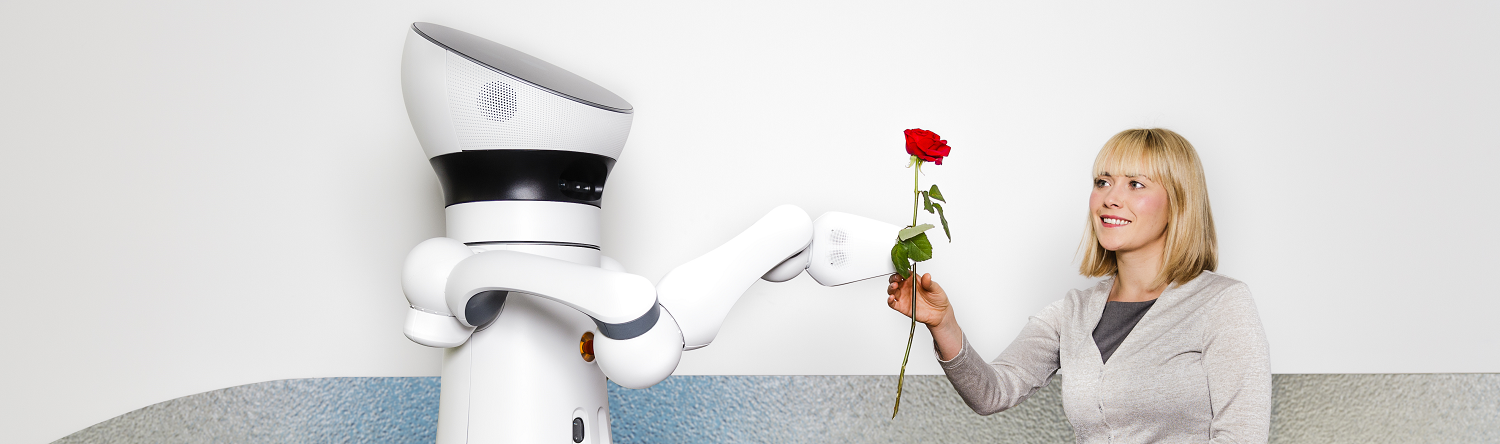

Accepting an object or handing it to another person is one of the simplest, most natural actions for humans, yet it is difficult for robots. The physical interaction is not the only part that demands sensitivity: where is the other person looking, and is he or she approachable? Unlike robots, humans subconsciously perceive signals that reflect the attention of their counterparts and behave accordingly. In the newly launched collaborative project "Attention-sensitive assistance robots: Interactive Basic Skills" (ASARob), researchers are working on bringing this interpersonal sensitivity to robots so that they can provide effective assistance in everyday situations.

Contributions and methodology

Fraunhofer IOSB contributed the multimodal recognition of the user's visual focus of attention and activity. The real-time capable methods were developed during the project and integrated into the overall architecture of the robot. The user's focus of attention was detected using robust real-time head pose recognition as well as monitoring of the objects in the robot's surrounding environment. In conjunction with the robot's dialog management system, a world model was generated in which possible attention targets of the user were continuously mapped and updated. By combining the user's interaction with individual objects, head rotation, and information from dialog management, the user's attention could be accurately tracked.
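To make the idea of mapping a head pose onto attention targets in a world model more concrete, the following is a minimal sketch and not the project's actual implementation. It assumes the head pose is already available as a 3D position and a viewing direction, and that the world model is a simple dictionary of named target positions. The function names, the data layout, and the 20-degree tolerance are all hypothetical choices made for illustration.

```python
import math


def angle_between(u, v):
    """Return the angle in degrees between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("vectors must be non-zero")
    # Clamp to [-1, 1] to guard against floating-point rounding.
    cosine = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.degrees(math.acos(cosine))


def estimate_attention_target(head_position, view_direction, targets,
                              max_angle_deg=20.0):
    """Pick the target closest to the viewing direction.

    `targets` is a hypothetical world model: a dict mapping a target
    name to its 3D position. Returns None if no target lies within
    `max_angle_deg` (an assumed tolerance) of the viewing direction.
    """
    best_name, best_angle = None, max_angle_deg
    for name, position in targets.items():
        to_target = tuple(p - h for p, h in zip(position, head_position))
        if all(c == 0 for c in to_target):
            continue  # target coincides with the head position
        angle = angle_between(view_direction, to_target)
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name


# Hypothetical world model with two possible attention targets.
world_model = {"cup": (2.0, 0.1, 0.0), "robot": (0.0, 2.0, 0.0)}
focus = estimate_attention_target((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), world_model)
```

In this sketch, a user whose head points along the x-axis is assigned the cup as the attention target, while a user looking away from every known target is assigned none. A real system would additionally have to update the target positions continuously and combine this cue with object interaction and dialog state, as described above.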

The system was designed to meet requirements in a home environment, with application-oriented evaluation criteria developed in consultation with two geriatric centers. The aim was to enable robots to reliably detect and interpret the behavior, actions and linguistic context of users in order to provide meaningful and situation-appropriate assistance in everyday life.

Fraunhofer Institute of Optronics, System Technologies and Image Exploitation IOSB
